Best AI Coding Agent for iOS Development (2026)

In December 2025, I asked ChatGPT how to automatically block apps on iOS after a timer expires. The response was clear:

"Programmatic auto-blocking after a certain time is not supported by the Screen Time API. The DeviceActivity token is opaque and cannot be used once your app is closed or in the background."

Claude said the same thing. So did Cursor's AI. Three tools, same answer: your app is impossible.

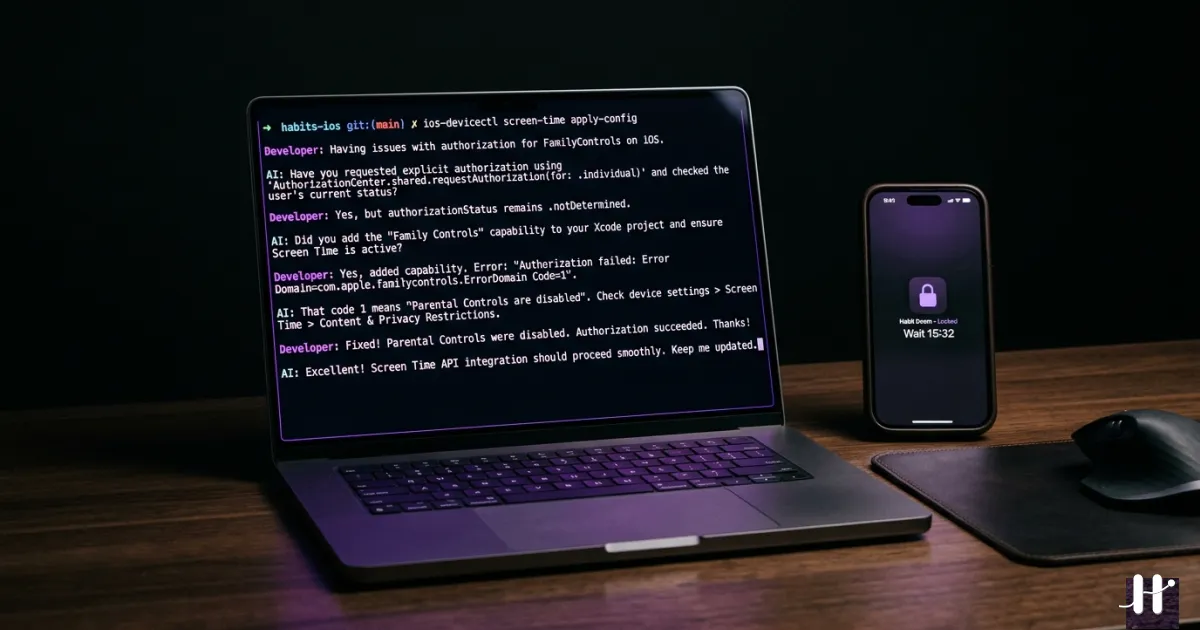

Four months later, Habit Doom is on the App Store. It auto-blocks apps. It works in the background. It does exactly what three AI agents told me could not be done.

Here is what happened.

What I Was Building

Before I get into the AI tools, let me explain what made this app so hard to build.

Habit Doom locks your distracting apps (Instagram, TikTok, YouTube) until you complete your daily habits. Not a timer. Not a gentle reminder. A hard lock at the operating system level. You physically cannot open Instagram until you have done your morning routine.

To build this on iOS, you need Apple's Screen Time API. And the Screen Time API is not one thing. It is three separate frameworks that need to work together:

- FamilyControls. Asks the user for permission to monitor and block apps.

- ManagedSettings. Applies the actual blocks (called "shields") to selected apps.

- DeviceActivity. Schedules time-based monitoring and hands those events to a DeviceActivityMonitor extension, which triggers actions.

Here is the catch: these frameworks do not all run inside your app. The DeviceActivityMonitor runs as a separate process called an "extension." Think of it as a tiny background program that iOS manages independently. Your main app and this extension need to share data through something called "App Groups," a shared storage space that both can access.
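A minimal sketch of that shared storage in Swift. It assumes an App Group with the placeholder ID group.com.example.habitdoom is enabled on every target; SharedStore and its key names are hypothetical, not Habit Doom's code. FamilyActivitySelection is Codable, so the main app can save the user's picks as JSON and the extension can decode the same tokens later.

```swift
import Foundation

/// Hypothetical helper: persists a Codable value as JSON in a UserDefaults suite.
/// On iOS, pass UserDefaults(suiteName:) with your App Group ID so the main app
/// and its extensions read and write the same storage.
struct SharedStore<Value: Codable> {
    let defaults: UserDefaults
    let key: String

    /// Encodes the value as JSON and writes it under `key`.
    func save(_ value: Value) throws {
        let data = try JSONEncoder().encode(value)
        defaults.set(data, forKey: key)
    }

    /// Returns nil if nothing has been saved yet or the data cannot be decoded.
    func load() -> Value? {
        guard let data = defaults.data(forKey: key) else { return nil }
        return try? JSONDecoder().decode(Value.self, from: data)
    }
}
```

In both targets you would create it as SharedStore<FamilyActivitySelection>(defaults: UserDefaults(suiteName: "group.com.example.habitdoom")!, key: "selection"). If one target is missing the App Group entitlement, the two processes will likely not see each other's writes.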

If that sounds complicated, it is. And it is where every AI tool I tried started to break.

I had just finished an iOS development course. I knew Swift and SwiftUI. I had never shipped an app. Here is how each AI tool performed across four months of building.

Cursor (Nov–Dec 2025)

Cursor was my first AI coding tool. I used it to build the first version of Habit Doom.

What Cursor did well:

Cursor was fast. It scaffolded the entire UI in days: habit creation screens, settings, navigation, the data model. For standard SwiftUI code, it was significantly faster than writing everything by hand.

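// Cursor generated clean SwiftUI views like this quickly
import SwiftUI

struct HabitRow: View {
    let habit: Habit

    var body: some View {
        HStack {
            Image(systemName: habit.icon)
                .foregroundColor(.purple)
            VStack(alignment: .leading) {
                Text(habit.name)
                    .font(.headline)
                Text("Current Streak: \(habit.streak) days")
                    .font(.caption)
                    .foregroundColor(.secondary)
            }
        }
    }
}

// Minimal stand-in for the app's Habit model (hypothetical fields, for illustration only)
struct Habit {
    let name: String
    let icon: String // SF Symbol name
    let streak: Int
}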

For lists, navigation stacks, forms, animations, and state management, Cursor handled it. If you are building a standard iOS app without complex system integrations, Cursor is a genuinely good tool.

Where Cursor broke down:

The Screen Time API. Cursor works by predicting the next line of code based on your current file. But the Screen Time API requires understanding how three separate targets (your app, the Shield extension, and the Monitor extension) coordinate through shared state.

Cursor could not hold that in its head. It would suggest API calls that looked right but used wrong types:

import FamilyControls  // FamilyActivitySelection
import ManagedSettings // ManagedSettingsStore, ApplicationToken

// What Cursor suggested (does not compile)
let store = ManagedSettingsStore()
store.shield.applications = selectedApps // Wrong: needs Set<ApplicationToken>
store.shield.applicationCategories = .all // Wrong: needs .specific(tokens)

// What actually works
// selection is the FamilyActivitySelection bound to FamilyActivityPicker
let store = ManagedSettingsStore()
store.shield.applications = selection.applicationTokens
store.shield.applicationCategories = .specific(selection.categoryTokens)

On December 29, 2025, I committed: "Tested ScreenTime feature but failed. Cleaned the rest of the code." That commit included two troubleshooting documents I had written (CRITICAL_FIX_NEEDED.md and EXTENSION_NOT_TRIGGERING_ISSUE.md) cataloging everything that was broken.

The extension was built. The code compiled. But iOS never triggered it. Cursor could not figure out why.

ChatGPT and Claude in the Browser (Dec 2025)

After Cursor hit a wall, I tried a different approach. I copied my Swift files into ChatGPT and Claude, explained the problem, and asked for solutions.

What they did well:

Both were better than Cursor at explaining iOS architecture. They understood the privacy model, the concept of opaque tokens, and why Apple designed the API the way it did. When I needed to learn why something worked a certain way, ChatGPT and Claude were helpful teachers.

Where they broke down:

Three specific failures that cost me weeks.

Problem 1: The extension never triggers.

My extension was built and embedded correctly, but iOS never called it. I wrote up the issue in detail:

"DeviceActivityReport extensions are NOT automatically triggered by iOS. There is no public API to request a DeviceActivityReport from the main app. The extension only runs for custom contexts."

ChatGPT suggested checking build phases and embedding settings. Claude suggested verifying the extension's Info.plist. Both were reasonable debugging steps, but neither identified the actual issue, which was that I was using the wrong type of extension for what I needed. I needed a DeviceActivityMonitor (which watches schedules), not a DeviceActivityReport (which generates usage reports).

Problem 2: App icons.

I needed to show the icons of apps the user selected for blocking. The Screen Time API returns opaque ApplicationToken objects. You cannot extract the app name, bundle ID, or icon from them directly. Apple designed it this way for privacy.

I asked both tools how to get the icons. Both said: you cannot. They suggested that other app-blocking apps maintain a pre-built library of app icons that they fall back to.

This was wrong. Apple's FamilyControls framework adds a SwiftUI Label initializer that takes an ApplicationToken and renders the app's icon and name natively. Neither ChatGPT nor Claude knew about it, probably because there are very few examples of it online.
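A minimal SwiftUI sketch of that approach. It assumes the user's picks live in a FamilyActivitySelection (the value FamilyActivityPicker fills in); the view name SelectedAppsView is hypothetical, not Habit Doom's code. Label(token) is the FamilyControls initializer that accepts an ApplicationToken: iOS draws the icon and name, while the token itself stays opaque to your code.

```swift
import FamilyControls
import ManagedSettings
import SwiftUI

// Hypothetical view: lists the apps the user picked for blocking.
struct SelectedAppsView: View {
    let selection: FamilyActivitySelection

    var body: some View {
        List {
            // ApplicationToken is Hashable, so it can identify each ForEach row.
            ForEach(Array(selection.applicationTokens), id: \.self) { token in
                // FamilyControls' Label(_:) renders the real icon and name.
                Label(token)
            }
        }
    }
}
```

For an icon-only grid, Label(token).labelStyle(.iconOnly) keeps just the icon.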

Problem 3: Auto-blocking after timer expires.

This was the big one. When a user's earned screen time runs out, Habit Doom needs to automatically re-block their apps, even if the app is closed.

Both ChatGPT and Claude told me this was impossible. The token is opaque. The main app cannot run in the background indefinitely. There is no push notification trigger for re-blocking. Their conclusion: you cannot programmatically re-block apps after a timer expires.

They were describing real constraints. But the conclusion was wrong.

Claude Code (Jan 2026–Present)

In January 2026, I switched to Claude Code, Anthropic's CLI tool that works directly in your project directory.

The difference was immediate. And it came down to one thing: Claude Code reads your entire project, not just the file you paste into a chat window.

Solving the extension trigger problem:

Claude Code read the main app, the extension code, the entitlements files, and the project.pbxproj all at once. It identified that I had set up a DeviceActivityReport extension (for generating usage reports) when I actually needed a DeviceActivityMonitor extension (for watching time-based schedules). It restructured the extension, updated the entitlements, and fixed the App Group configuration in one session.

Solving the app icons problem:

On January 5, 2026, exactly one week after the "failed" commit, I committed: "Fixed the locked app icon issue and removed all code for screentime report."

Claude Code found that Apple's Label view accepts an ApplicationToken and renders its icon and name. It built helper classes (AppIconExtractor, AppIconCaptureHelper, AppIconHelper) that capture and cache these icons for use throughout the app. Three files that ChatGPT and Claude said were impossible to write.

Solving auto-blocking:

This was Claude Code's biggest contribution. It designed a pattern called schedule-chaining:

- When the user earns screen time, start a DeviceActivity schedule that ends when the time expires.

- When the schedule ends, the intervalDidEnd callback fires (in the extension, not the main app).

- The extension re-applies shields (blocks) to all selected apps.

- The extension chains the next schedule for the next check.

The key insight that the other tools missed: DeviceActivityMonitor extensions are separate processes. They run independently of your main app, and iOS launches them when a monitored schedule starts or ends. So you do not need your app to be open to re-block apps. The extension does it.

// The schedule-chaining pattern for auto-blocking
// This runs in the DeviceActivityMonitor extension, NOT the main app
import DeviceActivity
import ManagedSettings

class HabitDoomMonitor: DeviceActivityMonitor {
    override func intervalDidEnd(for activity: DeviceActivityName) {
        super.intervalDidEnd(for: activity)
        // Timer expired: re-block all apps
        let store = ManagedSettingsStore()
        let tokens = loadTokensFromAppGroup() // Shared storage, returns Set<ApplicationToken>
        store.shield.applications = tokens
        // Schedule the next check
        chainNextSchedule()
    }

    func chainNextSchedule() {
        let center = DeviceActivityCenter()
        // Rotate slot names so schedules don't overwrite each other
        let slot = currentSlot % 5
        let name = DeviceActivityName("reblockChain_\(slot)")
        let schedule = DeviceActivitySchedule(
            intervalStart: nowComponents(),
            intervalEnd: nextCheckComponents(),
            repeats: false
        )
        // try? swallows errors: if iOS rejects this schedule, the chain stops
        try? center.startMonitoring(name, during: schedule)
    }

    // Not shown: loadTokensFromAppGroup(), currentSlot, nowComponents(),
    // and nextCheckComponents() are app-specific helpers.
}
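The monitor code leaves currentSlot, nowComponents(), and nextCheckComponents() undefined because they are app-specific. One hypothetical shape for them, sketched in plain Foundation so the logic can be unit-tested without DeviceActivity: map an ever-increasing counter onto five reusable activity names, and turn the current time and the expiry time into the DateComponents that DeviceActivitySchedule takes. The choice of hour, minute, and second fields is an assumption, DeviceActivityCenter can reject very short windows (it throws intervalTooShort), and this sketch handles neither that case nor windows that cross midnight.

```swift
import Foundation

/// Hypothetical planner for chained re-block schedules. Pure Foundation, so the
/// logic can be unit-tested without the DeviceActivity framework.
struct ChainPlanner {
    /// Number of rotating activity names (the monitor example uses 5).
    let slotCount = 5
    /// Calendar fields passed to DeviceActivitySchedule (an assumption).
    let fields: Set<Calendar.Component> = [.hour, .minute, .second]

    /// Maps an ever-increasing, non-negative counter onto a few reusable names.
    func slotName(for counter: Int) -> String {
        return "reblockChain_\(counter % slotCount)"
    }

    /// Start and end components for a window running from `now` to `expiry`.
    func window(from now: Date, until expiry: Date,
                calendar: Calendar) -> (start: DateComponents, end: DateComponents) {
        return (start: calendar.dateComponents(fields, from: now),
                end: calendar.dateComponents(fields, from: expiry))
    }
}
```

In the extension, the results would feed DeviceActivityName(planner.slotName(for: counter)) and DeviceActivitySchedule(intervalStart: w.start, intervalEnd: w.end, repeats: false). The counter itself belongs in App Group storage rather than in a property, since iOS can terminate the extension between callbacks.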

Beyond the core features, Claude Code helped debug 21 critical bugs: race conditions between extensions, midnight re-blocking failures, shield conflicts. Problems that only appear when three separate processes share mutable state.

I am still using Claude Code today for every feature and bug fix.

Tools I Have Not Tried

Two other AI coding agents worth knowing about:

OpenAI Codex

OpenAI's cloud-based coding agent. It reads your repository, writes code, and runs tests in a sandboxed Linux environment. The iOS limitation: it cannot compile Swift for iOS, run the simulator, or test against Apple's frameworks. You would need to take its output and integrate it yourself. Potentially useful for pure logic (data models, algorithms, networking code), but untested for anything involving Apple's restricted APIs.

Google Gemini / Jules

Google's Gemini powers the Jules coding agent, which focuses on async GitHub tasks like fixing bugs and opening PRs. Gemini has strong reasoning and a large context window. For iOS development, the tooling is less mature than Claude Code's interactive CLI. Jules works on isolated tasks rather than real-time development sessions. Could be worth trying for well-defined bug fixes, but I have not tested it against Screen Time API challenges.

Why iOS Is Uniquely Hard for AI

If you are building a web app or a standard mobile app, most AI tools work fine. iOS with system-level APIs is a different story. Here is why:

- Multi-target projects. A Screen Time app is three separate programs (app + two extensions) sharing state. Most AI tools reason about one file at a time.

- Opaque APIs. Apple hides information deliberately for privacy. Tokens do not contain human-readable data. The "obvious" solution (extract the app name from the token) is intentionally impossible.

- Almost no documentation. The Screen Time API has sparse official docs and very few community examples. AI models trained on Stack Overflow have almost nothing to learn from.

- Silent failures. One wrong entitlement and the app compiles, installs, and runs, but the extension never triggers. No error. No crash. Just silence.

- Extension lifecycle. Extensions are separate processes that iOS can kill and restart at any time. Code that works when the app is open can fail completely in the background.
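One common silent failure, a missing App Group entitlement, can be made visible with a launch-time check. This is a hypothetical sketch: on iOS, FileManager's containerURL(forSecurityApplicationGroupIdentifier:) returns nil when the group is not in the calling target's entitlements, so calling it from the app and from each extension flags the problem early. The group ID is a placeholder, and the check is iOS-only; on macOS the method returns a URL even for an invalid group.

```swift
import Foundation

/// Hypothetical diagnostic: true if this process can reach the shared App Group
/// container. On iOS, containerURL returns nil when the group is missing from
/// this target's entitlements.
func appGroupIsConfigured(_ groupID: String,
                          fileManager: FileManager = .default) -> Bool {
    return fileManager.containerURL(forSecurityApplicationGroupIdentifier: groupID) != nil
}
```

A debug build could call assert(appGroupIsConfigured("group.com.example.habitdoom")) when the app and each extension start, turning silence into a crash you can see.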

What I Recommend

Here is my honest recommendation for building an iOS app with AI in 2026:

| Phase | Tool | Use It For |
|---|---|---|
| UI and boilerplate | Cursor | SwiftUI views, navigation, forms, standard patterns |
| Learning and research | ChatGPT or Claude | Understanding concepts, reading docs, "why" questions |
| Actual development | Claude Code | Complex features, multi-file changes, system API integration |
| Async tasks | Codex or Jules | Isolated bug fixes, test generation (worth exploring) |

If your app uses standard iOS APIs (UIKit, SwiftUI, Core Data, networking), Cursor and ChatGPT will get you far. But the moment you touch Apple's restricted frameworks (the Screen Time stack of FamilyControls, ManagedSettings, and DeviceActivity, or HealthKit), you need a tool that understands your entire project, not just the file you are looking at.

For me, that tool is Claude Code. Three AI tools told me the core feature of my app was impossible. Claude Code shipped it.

Habit Doom is live on the App Store. The auto-blocking works. The app icons display correctly. All because one AI agent understood what the others could not.


Try Habit Doom

Lock your distracting apps. Complete your habits. Earn your screen time. It takes 30 seconds to set up.